Troubleshooting: Diagnostics, Errors, and Logs

We work hard to ensure that CodeScene just works. This means you point CodeScene to your codebase, press a button, and get the analysis results.

If you’re facing an unexpected issue or application behavior, you can use detailed analysis diagnostics and logs to gather more data and share them with Empear support.

Analysis Errors

On the rare occasion when an analysis fails, we make sure you know about it so that you can take corrective actions.

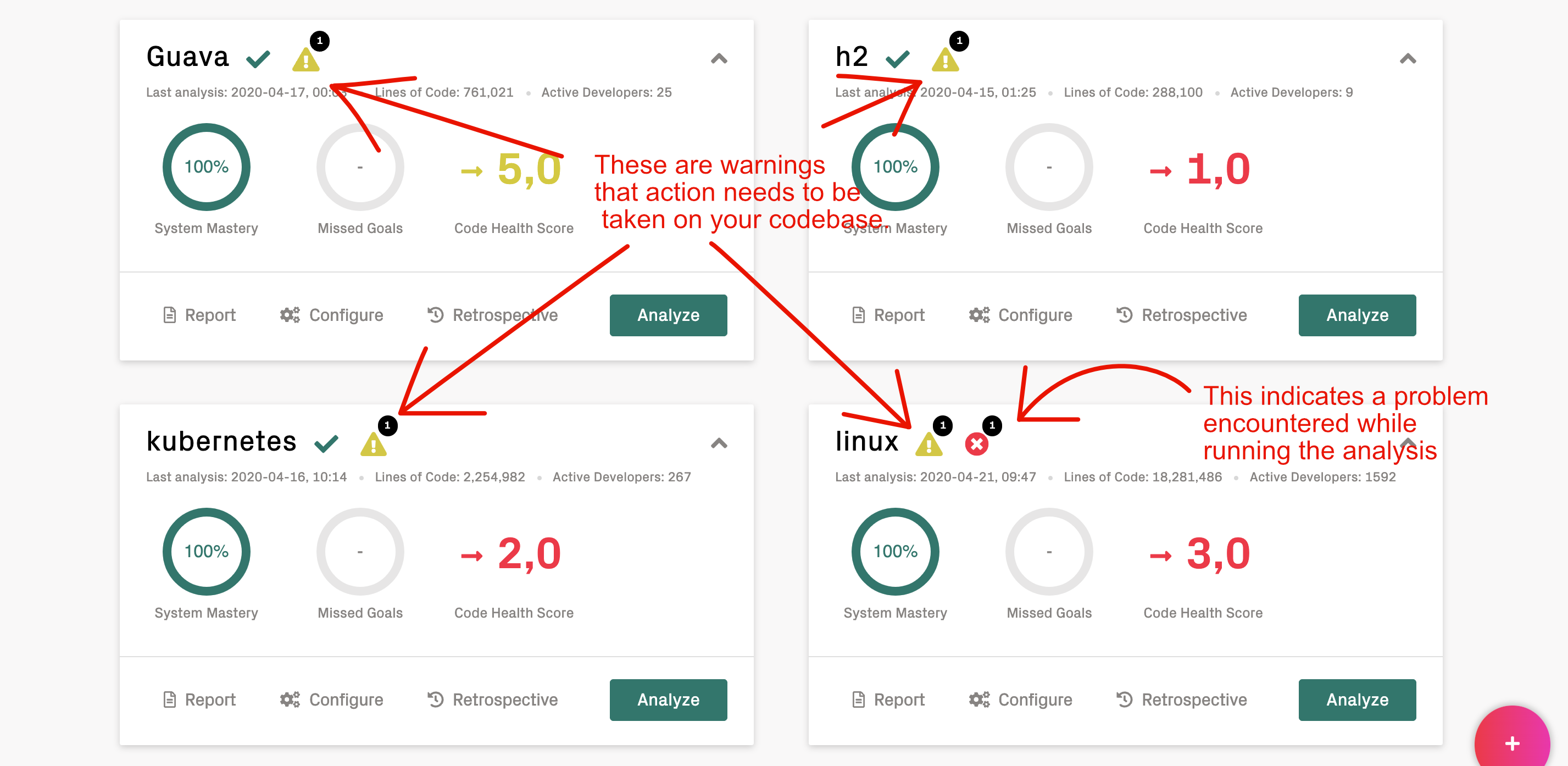

On the main landing page, CodeScene displays two kinds of notifications:

The first kind, marked with the triangular warning sign, is not for troubleshooting CodeScene. These notifications concern the codebases being analyzed: CodeScene has detected something that requires your attention. It’s time to check the analysis dashboard.

The second kind indicates that there was a problem when running the analysis.

Fig. 16 There are two kinds of notifications on the main landing page.

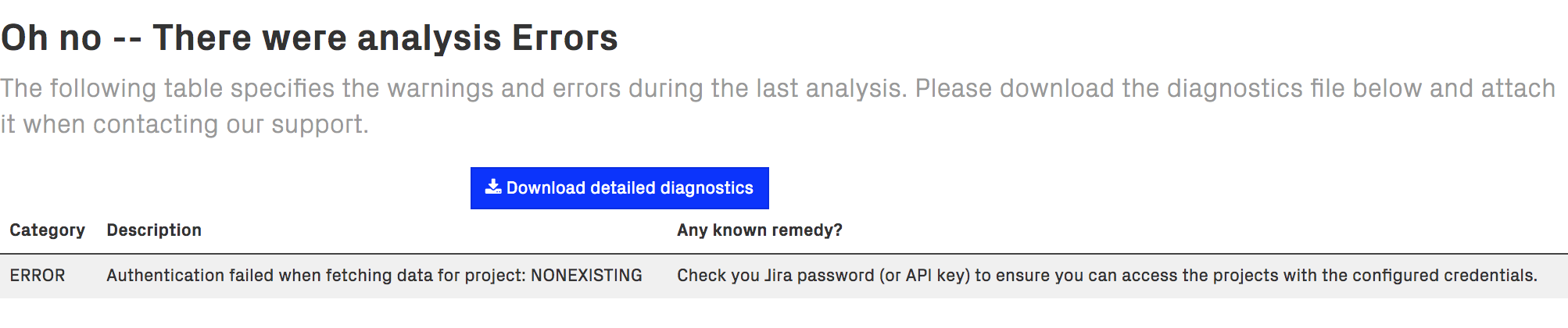

When you click on the analysis error icon, you can see what the problem or problems are.

Fig. 17 Click on the warning and error icons to retrieve the detailed diagnostics.

We distinguish between errors and warnings during an analysis:

Errors: An analysis error means that we couldn’t complete the analysis since we didn’t manage to fetch all the data we need. This is often due to an external data source that isn’t available. Examples include 3rd party integrations such as Jira.

Warnings: A warning means that the analysis completed but we identified some conditions that require your attention. A common example is that the Git repositories couldn’t be updated, which means that your latest code changes might not be reflected in the analysis. Another example might be parser errors when scanning the source code.

We do our best to keep the error messages informative. Please get in touch with our support if an error message or remedy isn’t clear – we consider error messages that are hard to understand an internal error, and love the opportunity to improve them. Click on Download detailed diagnostics to retrieve a file that can be shared with Empear for further inspection.

Logs

CodeScene logs contain important clues about errors and application behavior, so it’s always a good idea to attach them to your support requests.

Log levels

The default log level CodeScene uses is INFO.

To enable more detailed logging you have two options:

Set

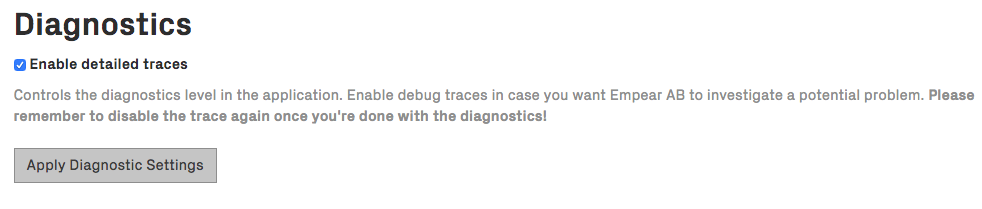

CODESCENE_LOG_LEVELenvironment variable: supported levels are ERROR, WARN, INFO, DEBUG, TRACE. INFO is a good default level but make sure to check the volume of logs CodeScene generates. This setting requires a restart.Check Enable detailed traces in Configuration -> System, as shown in the next figure. Note that this sets log level to TRACE which is very verbose. It’s useful for a temporary debugging session. This setting doesn’t surive a restart.

There’s also a special log configuration variable, CODESCENE_LOG_HTTP_BODY (‘true’ or ‘false’; the default is ‘false’), for logging HTTP request/response bodies. It can be useful for temporary debugging of tricky issues.

To have an effect, it also requires the log level to be DEBUG or TRACE (set either via CODESCENE_LOG_LEVEL or using the Enable detailed traces feature).

Be aware that this can produce a lot of log data and log sensitive information like passwords or API keys so it should be used sparingly and only for a brief period of time.
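As a rough illustration of the two settings above, the following shell sketch validates a log level before exporting it and checks whether HTTP body logging would take effect. The function names are hypothetical helpers, not part of CodeScene; only the variable names and level values come from the text above. Converting the level to upper case is an assumption made to match the level names as listed.

```shell
# Sketch only: set_codescene_log_level and http_body_logging_effective
# are hypothetical helper names, not something CodeScene ships.

# Export CODESCENE_LOG_LEVEL if the argument is one of the supported levels.
set_codescene_log_level() {
  _cs_level=$(printf '%s' "$1" | tr '[:lower:]' '[:upper:]')
  case "$_cs_level" in
    ERROR|WARN|INFO|DEBUG|TRACE)
      export CODESCENE_LOG_LEVEL="$_cs_level"
      ;;
    *)
      echo "unsupported log level: $1" >&2
      return 1
      ;;
  esac
}

# Return 0 when HTTP body logging would take effect according to the rule
# above: CODESCENE_LOG_HTTP_BODY is 'true' and the level is DEBUG or TRACE.
# This only inspects environment variables; it cannot see a TRACE level
# enabled through the Enable detailed traces checkbox.
http_body_logging_effective() {
  [ "${CODESCENE_LOG_HTTP_BODY:-false}" = "true" ] || return 1
  case "${CODESCENE_LOG_LEVEL:-INFO}" in
    DEBUG|TRACE) return 0 ;;
    *) return 1 ;;
  esac
}
```

For a Docker deployment, the exported value could then be passed through with docker run -e CODESCENE_LOG_LEVEL (when no value is given, Docker takes it from the calling environment). Remember that the environment variable only takes effect after a restart.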

Where can I find the logs?

CodeScene logs to standard output.

The way you retrieve logs depends on how you run it:

Standalone JAR: standard output - you may want to redirect it to a file.

Docker container: retrieve logs via the docker logs command.

Tomcat: logs are stored in the <TOMCAT_HOME>/logs/ directory. There’s usually a single big catalina.out file which contains aggregated logs from the beginning or from a particular point in time (after you cleared the log). There are also many localhost.YYYY-MM-DD.log files which can contain other useful information, especially about deployment-time errors.
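Whichever way you retrieve the logs, it can help to narrow them down before attaching them to a support request. The sketch below keeps only lines that mention ERROR or WARN as a whole word. It assumes the level appears as an upper-case token in each log line, which may not hold for every log format, and filter_problems is a hypothetical helper name.

```shell
# Sketch only: filter_problems is a hypothetical helper.
# Reads log lines on stdin and prints those containing ERROR or WARN
# as a standalone upper-case word (so "WARNING" or "ERRORS" do not match).
filter_problems() {
  # "|| true" keeps the exit status at 0 when nothing matches.
  grep -E '(^|[^A-Za-z])(ERROR|WARN)([^A-Za-z]|$)' || true
}

# Possible uses (placeholders in angle brackets are yours to fill in):
#   docker logs <container> 2>&1 | filter_problems
#   filter_problems < <TOMCAT_HOME>/logs/catalina.out
```

The 2>&1 in the Docker example matters because docker logs replays the container's standard error on its own standard error stream.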

PR Integration error when running CodeScene with docker-compose

While setting up the PR Integration for your project, on rare occasions CodeScene might fail with the following exception:

sun.security.validator.ValidatorException: PKIX path building failed:

sun.security.provider.certpath.SunCertPathBuilderException:

unable to find valid certification path to requested target

In most cases, this happens because the certificate provided by the host URL is self-signed or one of the certificates in the chain is not trusted. The workaround is to create a local trust store and import the host URL certificate and the CA chain following the guide below:

1. Get the certificate from the host URL:

openssl s_client -showcerts -connect myhost.com:443 < /dev/null | openssl x509 -outform pem > mycertificate.pem

2. Make a folder to save the store:

mkdir store

3. Create the keystore (you will be asked to set a password; also answer yes when asked to trust the certificate):

keytool -import -alias mykey -keystore store/mytruststore.ks -file mycertificate.pem

4. Import the Java cacerts. On macOS, the source password is empty (just press enter) and the destination password is the one set in step 3; for other operating systems, you should adapt the srckeystore path value.

keytool -importkeystore -srckeystore $(/usr/libexec/java_home)/lib/security/cacerts -destkeystore store/mytruststore.ks

You should now have a truststore in store/mytruststore.ks.

5. Edit docker-compose.yml and add JAVA_OPTIONS and the volume containing the store (replace yourpass with the password set in step 3).

environment:

...

- JAVA_OPTIONS=-Djavax.net.ssl.trustStore=/store/mytruststore.ks -Djavax.net.ssl.trustStorePassword=yourpass

...

volumes:

...

- ./store:/store

...
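One thing that can go wrong in the procedure above is that the openssl command fails to connect and leaves an empty mycertificate.pem behind, which then makes the keytool import fail. A minimal sketch of a sanity check follows; has_pem_certificate is a hypothetical helper name and not part of openssl or keytool.

```shell
# Sketch only: has_pem_certificate is a hypothetical helper.
# Returns 0 if the given file is non-empty and contains a PEM certificate header.
has_pem_certificate() {
  # -s: the file exists and is not empty.
  # grep -e is needed because the pattern itself starts with dashes.
  [ -s "$1" ] && grep -q -e '-----BEGIN CERTIFICATE-----' "$1"
}

# Example use with the file name from the procedure above:
#   has_pem_certificate mycertificate.pem || echo "no certificate was saved" >&2
#
# Once the truststore has been created, its entries can be listed with:
#   keytool -list -keystore store/mytruststore.ks
```

Running the check before the keytool import gives a clearer failure than the import error itself, and keytool -list lets you confirm that the mykey alias ended up in the store.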

Advanced diagnostics & profiling

jcmd

jcmd is a diagnostics command-line tool shipped with the JDK. It can be used to quickly gather various data (GC metrics, VM info, etc.) about a running Java application, including CodeScene.

To use jcmd with CodeScene running in a Docker container, you can leverage the jattach utility. It’s pre-installed in our Docker image.

docker exec -i -t $(docker ps | grep 'codescene' | head -n 1 | cut -d ' ' -f1) /bin/bash

jattach $(pgrep java) jcmd help

# e.g. to get heap statistics

jattach $(pgrep java) jcmd GC.heap_info

Connected to remote JVM

JVM response code = 0

garbage-first heap total 326656K, used 154735K [0x00000000b5800000, 0x0000000100000000)

region size 1024K, 21 young (21504K), 8 survivors (8192K)

Metaspace used 176770K, capacity 179022K, committed 180028K, reserved 1191936K

class space used 36902K, capacity 37745K, committed 37832K, reserved 1048576K
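If you want a single number out of the GC.heap_info output, the heap line can be reduced to a usage percentage. The sketch below assumes the output has the shape shown above (a line containing "heap", "total" and "used" with sizes in K); other garbage collectors or JVM versions may print a different layout. heap_used_percent is a hypothetical helper name.

```shell
# Sketch only: heap_used_percent is a hypothetical helper.
# Reads GC.heap_info output on stdin and prints used/total as a percentage,
# based on the first line that contains "heap", "total" and "used".
heap_used_percent() {
  awk '/heap/ && /total/ && /used/ {
    for (i = 1; i <= NF; i++) {
      # Adding 0 makes awk keep the leading number, so "326656K," becomes 326656.
      if ($i == "total") total = $(i + 1) + 0
      if ($i == "used")  used  = $(i + 1) + 0
    }
    if (total > 0) { printf "%.1f\n", used * 100 / total; exit }
  }'
}

# Possible use inside the container:
#   jattach $(pgrep java) jcmd GC.heap_info | heap_used_percent
```

For the sample output above (total 326656K, used 154735K), this prints 47.4.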

Java Flight Recorder (JFR)

JFR is a powerful diagnostics and profiling tool that ships with every modern JDK version (including JDK 8). Continuous JFR profiling is a low-overhead (<1 %) profiling mode that can be enabled in production without worries.

Our Docker image enables JFR profiling by default and stores JFR recordings in the /codescene/diagnostics/jfr-dumps/ directory (look for files named hotspot-pid-**).

The recordings are dumped whenever the app is (gracefully) stopped or when the in-memory recording size reaches the 100 MB threshold.

You can customize the directory where the recordings are stored via the JFR__DUMPS_DIR env var (notice two underscores after JFR).

The old recordings are removed when the used disk space goes over the configured limit (1 GB). You can customize the limit via the JFR__MAX_DUMPS_SIZE_IN_MB env var.

You can also start and dump JFR recordings with jcmd (see the previous section).

The following example shows how to use jcmd and JFR within a Docker container:

jattach $(pgrep java) jcmd JFR.check

Connected to remote JVM

JVM response code = 0

Recording 1: name=1 maxsize=100.0MB (running)

jattach $(pgrep java) jcmd JFR.dump

...

Dumped recording, 8.6 MB written to:

/codescene/hotspot-pid-32-2021_12_16_09_55_45.jfr

When you have a JFR recording (.jfr file), you can open and analyze it with the Java Mission Control (JMC) GUI application.
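To pick a recording to open in JMC, you usually want the most recent one. The following sketch prints the newest file matching hotspot-pid-* in a directory, defaulting to the dump directory mentioned above. latest_jfr_recording is a hypothetical helper name, and the default path only applies if you have not changed JFR__DUMPS_DIR.

```shell
# Sketch only: latest_jfr_recording is a hypothetical helper.
# Prints the most recently modified hotspot-pid-* file in the given directory
# (default: /codescene/diagnostics/jfr-dumps), or nothing if there is none.
latest_jfr_recording() {
  _jfr_dir=${1:-/codescene/diagnostics/jfr-dumps}
  # ls -t sorts by modification time, newest first.
  ls -t "$_jfr_dir"/hotspot-pid-* 2>/dev/null | head -n 1
}

# Possible use: find the file inside the container, then copy it to the host
# (placeholders in angle brackets are yours to fill in):
#   docker cp <container>:<path-printed-above> .
```

Copying the file to the host with docker cp lets you open it in a locally installed JMC.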